Hello. I’m Inoue, and I work on private cloud infrastructure at LY Corporation.

LY Corporation’s massive volume of traffic and data runs on a large-scale private cloud that we build and operate ourselves. Today, we’re in the middle of a major transition: consolidating two large legacy infrastructure as a service (IaaS) platforms, ex-LINE’s "Verda" and ex-Yahoo’s "YNW", into the next-generation platform, "Flava".

| | Verda | YNW | Flava |
|---|---|---|---|
| Service launch | 2016 | 2013 | 2025 |
| Hypervisors (physical servers) | 11,000+ | 27,000+ | 500+ |
| Virtual machines (VMs) | 130,000+ | 220,000+ | 9,000+ |
| OpenStack cluster count | 4 | 160+ | 1+ |
| Object storage capacity in petabytes (PB) | 200+ | 100+ | 5+ |

In this post, we’ll take a closer look at the design principles and core technologies that keep this enormous pool of resources running efficiently and without interruption, and at the future that "Flava" is designed to enable.

This article focuses on the technical stack in the foundational layers of our cloud: infrastructure as a service (IaaS), networking, and storage. Higher layers that run on top, such as Kubernetes as a service (KaaS), database as a service (DBaaS), and platform as a service (PaaS), will be covered in an upcoming post.

Design principles and operations

Our cloud is designed and operated based on the following principles.

Designing for failure (and using the cloud accordingly)

To maximize the speed of service development, we avoid over-investing in availability guarantees at the infrastructure layer alone. Instead, we assume failures can happen at any time. Concretely, our design is built around three pillars.

- Pursuing statelessness

  We define data stored on a virtual machine’s (VM) root disk (ephemeral disk) as temporary. We move persistent data to external storage to minimize service impact when an instance fails.

- Application-driven availability

  Rather than attempting to provide perfect availability through infrastructure alone, we ensure reliability by combining infrastructure with application-side architecture, reducing unnecessary infrastructure complexity.

- Faster recovery

  In an incident, the priority is not restoring the exact previous state. It’s keeping the service running. We recommend an operational approach that rebuilds environments quickly using Infrastructure as Code (IaC), rather than spending extended time on root-cause analysis first.

Making this design philosophy work requires understanding and cooperation from our users. That’s why we continuously invest in education and consulting on failure-aware design. We also encourage adoption of more abstracted environments such as KaaS and PaaS, so developers can build resilient services without having to think about every detail.

Advancing operations and monitoring

To operate a large-scale cloud reliably with a small team, we focus on deep automation and strong observability.

End-to-end automation and governance with IaC

From operating system (OS) settings to package installation and network provisioning, we codify all configuration management and enforce automated rollout through a continuous integration/continuous delivery (CI/CD) pipeline. We also deploy in availability zone (AZ) units, building in a safety mechanism that keeps the blast radius contained if anything goes wrong.
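To make the idea of deploying in AZ units concrete, here is a minimal sketch that applies a change one AZ at a time and stops as soon as an AZ fails its health check. The function names, host names, and health check are hypothetical and chosen only for illustration; this is not the actual pipeline.

```python
from typing import Callable, Dict, List


def rollout_by_az(
    hosts_by_az: Dict[str, List[str]],
    apply_change: Callable[[str], None],
    is_healthy: Callable[[str], bool],
) -> List[str]:
    """Apply a change one AZ at a time and stop at the first unhealthy AZ.

    Returns the AZs that were rolled out and passed their health check.
    """
    completed: List[str] = []
    for az, hosts in hosts_by_az.items():
        for host in hosts:
            apply_change(host)
        # Verify the whole AZ before touching the next one.
        if not all(is_healthy(host) for host in hosts):
            break  # the blast radius stays within this AZ
        completed.append(az)
    return completed


if __name__ == "__main__":
    # Hypothetical inventory: AZ name -> hypervisors in that AZ.
    inventory = {"az-1": ["hv-101", "hv-102"], "az-2": ["hv-201"], "az-3": ["hv-301"]}
    applied: List[str] = []
    done = rollout_by_az(
        inventory,
        apply_change=applied.append,
        is_healthy=lambda host: host != "hv-201",  # simulate a bad rollout in az-2
    )
    print(done)     # ['az-1']
    print(applied)  # ['hv-101', 'hv-102', 'hv-201']
```

In this simulated run, the change never reaches az-3, because the rollout halts once az-2 reports an unhealthy host.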

Observability that sees both the forest and the trees

To accurately understand what’s happening in a large environment, we maintain tools specialized for both macro-level monitoring and micro-level investigation. Engineers use them to move seamlessly between "forest" and "tree" perspectives, depending on the situation.

- The forest (macro view)

  Using Prometheus, Grafana, and internal dashboards, we continuously monitor overall cloud health and trends to catch early signs of anomalies.

- The trees (micro view)

  When an anomaly is detected, we drill into deep signals such as kernel-level traces and packet captures to pinpoint the cause.

This set of tools, along with the engineering skill to use them effectively and trace from the big picture down to root cause, is the foundation of stable operations.

Technology and mindset behind large-scale cloud

Across every domain of the cloud, we take a software-defined approach. We grow alongside open source software (OSS) communities and maintain a consistent stance: if something we need doesn’t exist, we build it ourselves.

Using and contributing to OSS

Our platform is built around OSS such as OpenStack, Envoy, the Linux kernel (including extended Berkeley packet filter (eBPF) and express data path (XDP)), FRRouting (FRR), and Ceph. We don’t just use them. We continuously contribute back to the community.

For example, we keep providing fixes upstream for OpenStack and Ceph (Example 1, Example 2, Example 3, Example 4). In networking, we designed and implemented capabilities related to segment routing over IPv6 (SRv6) and border gateway protocol (BGP) needed for Flava’s virtual private cloud (VPC), and we have contributed commits to FRRouting and the Linux kernel (Example 1, Example 2, Example 3, Example 4).

When we need functionality for our workloads, we don’t stop at maintaining private forks or local patches. We implement it in the upstream OSS itself. We believe this approach avoids long-term maintenance costs for proprietary patches while helping the global community evolve the technology.

Software-defined technologies that push commodity hardware to its limits

From the perspectives of performance, cost, and flexibility, we minimize reliance on expensive purpose-built appliances. Not only do we run IaaS compute nodes on commodity x86 servers, but we also operate network products such as VPC, DNS, and Load Balancer on general-purpose x86 servers.

Where high performance is required, we combine high-speed data plane implementations using XDP, hardware offloading, and careful tuning, aiming for near wire-speed throughput and extremely low latency on commodity servers.

Full-scratch development to close the gaps

For internal challenges that OSS alone cannot fully address, we build in-house solutions from scratch.

Examples

- Object storage Dragon

  A proprietary object storage system designed with HDD capacity efficiency and operability as the top priorities.

- Network control and supporting tools

  Core operational components, such as a software-defined networking (SDN) control plane, load balancer health check agents, and service discovery, are implemented in Rust, Go, Python, and other languages.

Autonomous operations designed for hardware failures

In an environment with tens of thousands of hypervisors and petabyte-scale storage, hardware failures happen somewhere every day. It’s impossible to handle all of them manually. Today, we’ve automated most of the flow, from failure detection to requesting on-site data center work and reintegrating replaced hardware back into clusters.

That said, some tasks and irregular failure patterns still require hands-on engineering response. Going forward, we aim to use large language models (LLMs) for these decision-heavy workflows as well, further advancing automation.
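One way to picture this flow is as a small state machine: the known path from detection to reintegration runs automatically, and anything unexpected is handed to an engineer. The sketch below is purely illustrative. The state names and event names are assumptions, not the actual workflow implementation.

```python
from enum import Enum
from typing import Iterable


class State(Enum):
    IN_SERVICE = "in_service"
    FAILED = "failed"                      # failure detected by monitoring
    REPAIR_REQUESTED = "repair_requested"  # on-site data center work requested
    REPLACED = "replaced"                  # hardware swapped, not yet back in the cluster
    NEEDS_ENGINEER = "needs_engineer"      # irregular pattern, handed to a human


# Hypothetical event names; each known (state, event) pair has exactly one next state.
TRANSITIONS = {
    (State.IN_SERVICE, "failure_detected"): State.FAILED,
    (State.FAILED, "repair_requested"): State.REPAIR_REQUESTED,
    (State.REPAIR_REQUESTED, "hardware_replaced"): State.REPLACED,
    (State.REPLACED, "reintegrated"): State.IN_SERVICE,
}


def next_state(state: State, event: str) -> State:
    """Follow the automated path, or escalate when the event is unexpected."""
    return TRANSITIONS.get((state, event), State.NEEDS_ENGINEER)


def run(events: Iterable[str], state: State = State.IN_SERVICE) -> State:
    """Feed events through the workflow, stopping once a human is needed."""
    for event in events:
        state = next_state(state, event)
        if state is State.NEEDS_ENGINEER:
            break
    return state


if __name__ == "__main__":
    normal = ["failure_detected", "repair_requested", "hardware_replaced", "reintegrated"]
    print(run(normal).name)                                     # IN_SERVICE
    print(run(["failure_detected", "hardware_replaced"]).name)  # NEEDS_ENGINEER
```

The explicit escalation state corresponds to the decision-heavy cases that still need hands-on response today.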

Evolving to the next-generation platform, "Flava"

"Flava" is our next-generation platform, launched in 2025 after development began in the second half of 2023, bringing together years of operational expertise from our legacy clouds. Adoption is already expanding, with close to 10,000 VMs created. To address many long-standing issues in the legacy platforms at their root, Flava introduces a wide range of improvements. We took advantage of the rare opportunity to rebuild infrastructure at this scale from the ground up. We chose a fundamental architectural redesign rather than incremental change.

Key improvements in Flava

Eliminating dedicated environments and moving to a single resource pool

In the legacy cloud, we had many dedicated environments (clusters, resource pools, and more) for specific products and services. This made capacity management puzzle-like and complex, while also creating challenges in resource utilization. To remove this complexity, Flava eliminates dedicated environments and is designed to absorb nearly all products and services into a single, massive resource pool. As a result, the number of variables in capacity planning drops dramatically, and both resource efficiency and agility, from procurement through delivery, improve significantly.
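A small arithmetic example shows why pooling helps. The sketch below compares the capacity shortfall when each product can only draw on its own dedicated pool with the shortfall when all free capacity sits in one pool. The product names and numbers are made up, and the model deliberately ignores host-level placement constraints.

```python
from typing import Dict


def shortfall_dedicated(free: Dict[str, int], demand: Dict[str, int]) -> Dict[str, int]:
    """Per-product shortfall when each product may only use its own pool."""
    return {
        product: needed - free.get(product, 0)
        for product, needed in demand.items()
        if needed > free.get(product, 0)
    }


def shortfall_shared(free: Dict[str, int], demand: Dict[str, int]) -> int:
    """Total shortfall when all free capacity sits in one pool."""
    return max(0, sum(demand.values()) - sum(free.values()))


if __name__ == "__main__":
    # Hypothetical free vCPUs per dedicated pool and requested vCPUs per product.
    free_vcpus = {"search": 30, "ads": 20, "batch": 10}
    requested = {"search": 40, "ads": 10, "batch": 5}
    print(shortfall_dedicated(free_vcpus, requested))  # {'search': 10}
    print(shortfall_shared(free_vcpus, requested))     # 0
```

With dedicated pools, one product runs short even though 60 vCPUs are free in total against 55 requested. With a single pool, only the two totals need to be tracked.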

An upstream-aligned architecture that preserves maintainability

In the legacy cloud, too many custom modifications to OpenStack made upgrades difficult. Learning from that, Flava adopts an architecture that stays aligned with upstream OpenStack. We keep custom patches to a minimum, and when functional changes are needed, we proactively contribute them upstream so they can be merged into the main project. By removing upgrade barriers, we enable a regular update cadence and keep both security and the latest features continuously available.

VPC by default

To fundamentally raise the bar for network security, Flava makes VPC usage the default. This gives every tenant a robust security model in a multi-tenant environment from the start, ensuring that tenants’ traffic does not interfere with one another.

Introducing VPCs also transformed how we build secure environments for handling sensitive information such as personal data. Previously, we required physical tenant isolation using firewalls and dedicated virtual local area networks (VLANs), and environment preparation could take months. With Flava, we achieve equivalent security through logical isolation with VPCs and coordinated integration across related products, enabling secure environments to be provisioned in just minutes.

At the same time, making VPCs the default at company scale is something we did not do in the legacy cloud. Flava is our first attempt. A VPC platform that processes all traffic must deliver exceptionally high reliability and performance. Leveraging operational expertise from the legacy cloud, we’re also pursuing fundamental performance improvements in parallel, including modernizing the VPC data plane with XDP.

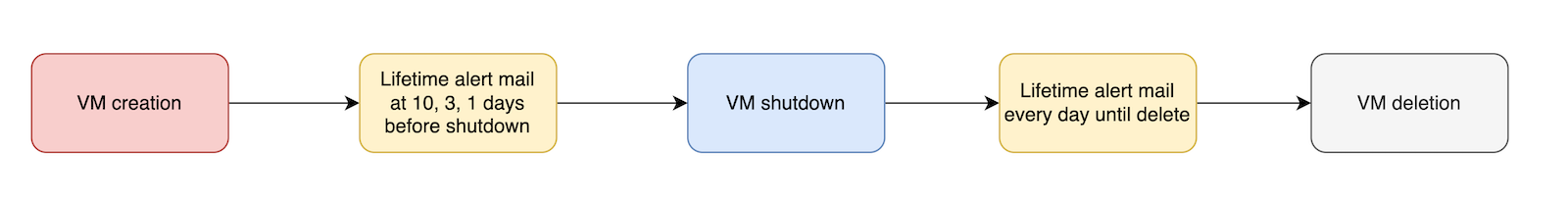

Cost optimization together with users

Flava also includes features that let users optimize costs autonomously. In development environments (from the cloud user’s perspective), we require setting a resource lifetime (expiration), and we automatically delete "zombie" resources.
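As a rough sketch of how a lifetime requirement and automatic cleanup might fit together, the example below rejects development resources without an expiration and selects expired ones for deletion. It assumes that a "zombie" is a development resource whose lifetime has passed. The `Resource` type, field names, and environment labels are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Resource:
    name: str
    environment: str                # "dev" or "prod" (hypothetical labels)
    expires_at: Optional[datetime]  # None means no lifetime was set


def validate(resource: Resource) -> None:
    """Reject development resources that were created without a lifetime."""
    if resource.environment == "dev" and resource.expires_at is None:
        raise ValueError(f"{resource.name}: dev resources must set expires_at")


def find_expired(resources: List[Resource], now: datetime) -> List[Resource]:
    """Return dev resources whose lifetime has passed and can be deleted."""
    return [
        r for r in resources
        if r.environment == "dev" and r.expires_at is not None and r.expires_at <= now
    ]


if __name__ == "__main__":
    now = datetime(2025, 6, 1, tzinfo=timezone.utc)
    fleet = [
        Resource("dev-vm-old", "dev", datetime(2025, 5, 1, tzinfo=timezone.utc)),
        Resource("dev-vm-new", "dev", datetime(2025, 7, 1, tzinfo=timezone.utc)),
        Resource("prod-vm", "prod", None),
    ]
    for resource in fleet:
        validate(resource)
    print([r.name for r in find_expired(fleet, now)])  # ['dev-vm-old']
```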

For object storage, we offer multiple bucket classes such as "High Performance" and "Scalable" (high capacity, low cost). Users can switch classes later without changing endpoints. This enables dynamic optimization of cost and performance based on workload characteristics, such as changes in access frequency.
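The property that matters here is that the endpoint does not depend on the bucket class. The minimal sketch below models that property only. The `Bucket` type and the URL scheme are hypothetical placeholders, not the actual object storage API; the two class names come from the text above.

```python
from dataclasses import dataclass

BUCKET_CLASSES = {"High Performance", "Scalable"}  # class names from the text


@dataclass
class Bucket:
    name: str
    bucket_class: str = "High Performance"

    @property
    def endpoint(self) -> str:
        # Hypothetical URL scheme: derived from the bucket name only, never the class.
        return f"https://objects.example.invalid/{self.name}"


def switch_class(bucket: Bucket, new_class: str) -> None:
    """Change the storage class in place; the endpoint is untouched."""
    if new_class not in BUCKET_CLASSES:
        raise ValueError(f"unknown bucket class: {new_class}")
    bucket.bucket_class = new_class


if __name__ == "__main__":
    logs = Bucket("access-logs")
    before = logs.endpoint
    switch_class(logs, "Scalable")  # for example, after access frequency drops
    print(logs.bucket_class)        # Scalable
    print(logs.endpoint == before)  # True
```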

Challenges and opportunities we’re facing in Flava

At the moment, Flava is still at the stage where only the minimum set of products has been released, and expanding functionality is an urgent priority. Because we prioritized development speed, bugs and overlooked cases can also surface after release. We are addressing these quickly while continuing to mature the platform.

The biggest challenge, however, is migration from the legacy platforms. We reduce user burden through transparent migration paths and tooling, but some areas still inevitably require manual work. How do we shorten the period of overlapping investment across old and new platforms, and optimize total cost? Our challenge is only just beginning.

The team and culture behind the stack’s evolution

Finally, let’s talk about the team that continues to evolve this technology stack. Our team brings together members with diverse backgrounds across layers, from low-level specialists who read and reason about kernel code to engineers who craft management consoles in modern web front ends.

What we share is a mindset: we don’t treat infrastructure as a black box. We understand what’s inside and take ownership in controlling it. The deep engagement with OSS mentioned earlier, such as contributing patches upstream, is possible only because we have engineers with the skills to trace behavior at the source-code level. Even when we adopt commercial products, we don’t remain passive users; we work closely with vendors, discuss specifications in depth, and request improvements. This attitude of building a cloud we can control with our own hands is what drives LY Corporation’s private cloud forward.

Closing

In this post, we introduced our effort to unify two large clouds, developed independently by ex-LINE and ex-Yahoo, and renew them as the next-generation platform, "Flava". Our approach is to take full advantage of OSS such as OpenStack, while rebuilding or replacing parts that don’t fit our scale or requirements. Failure-aware design, deep automation with IaC, and technologies that extract the maximum performance from commodity hardware are capabilities we’ve built up to keep supporting LY Corporation’s massive traffic reliably.

We hope this article serves as a useful reference for anyone interested in designing large-scale infrastructure or in the technical stack behind private clouds.